Transitioning to the “new normal” after Covid-19-related lockdowns is an interesting time for me. I don’t like changes to routine and seem to be catching every bug and virus going, but I do enjoy getting out and meeting real people (same people, in real life!). The pacing of the year seems all out of kilter, so I thought I would write a summary post of my Microsoft Certification renewals and exam passes, for my own reference and as a marker of progress.

Exam Administration

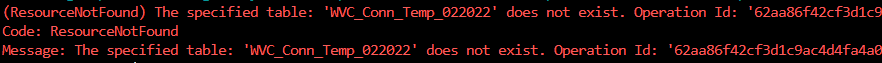

2022 was my second year of taking exams at home. It still feels like a new idea (I’ve been sitting Microsoft exams since 1997), but it is now my preferred approach. I have a routine where I reorganise my office beforehand. The other significant improvement has been the Android app provided by Pearson VUE, which simplifies the sign-on process (starting an exam at home involves verifying your identity and your examination surroundings). I had issues in the past with Edge on Android, but this year there was only one check-in where I had to fall back to a browser (Chrome) on my smartphone.

I tend to choose early evening for exams; I pack up after work, feed my cat and let him out and then tidy my office.

2022 was also the year when most (if not all) of the administration web pages moved to our profiles on Microsoft Learn. Although the information is in a slightly different format than before, it is great from a consistency perspective, and I’m happy to report that all of my profiles have reliably matched up across exam taking, vouchers, renewals and certifications.

Full Exam Progress

It took me a while to “spin up” to sitting exams this year and I didn’t pass any exams until the second half of the year. Checking back, though, I think I know why: I had been putting off the Expert examination for Microsoft 365 until I had cleared the security exams, and I used the first half of 2022 to build confidence. I also failed MS-100 at my first attempt but took my own advice and re-sat it two weeks later.

I kept going and passed MS-101 the month after, and an existing certification meant I acquired the Expert certification as a result. This was a good milestone for me: although my focus is Azure, it is really helpful to understand Microsoft 365, and I’d historically held the MCSE in Azure & Microsoft 365. Back then Microsoft Cloud was pretty much just those two; these days one could argue that Power Platform and Dynamics 365 are mature enough to be essential reading.

Following that I looped back to security as the expert exam had been released for Cybersecurity Architects and I was very happy to get a pass for that too.

Over the year I acquired two exam vouchers from Microsoft conferences: one at Build in the spring and one at Ignite later in the year. The first of these expired in December 2022, the list of valid exams was quite short and I had already passed most of them, so I chose AI-102 and was delighted with a pass.

| Date | Exam |
| --- | --- |
| 23 June 2022 | Exam MS-100: Microsoft 365 Identity and Services |
| 20 July 2022 | Exam MS-101: Microsoft 365 Mobility and Security |
| 3 August 2022 | Exam SC-100: Microsoft Cybersecurity Architect |
| 6 December 2022 | Exam AI-102: Designing and Implementing a Microsoft Azure AI Solution |

As for actual certifications, the exam passes above produced a mix of results. The current certification map appears to be shifting towards a single exam for Associate certifications and two exams for Expert certifications, nothing like the old MCSE sets, which I seem to remember needing five exams!

The passes above earned me two Expert certifications, which built on the existing Associate certifications I held in Security and Teams Administration, and the AI-102 pass earned me one Associate certification.

Exam Renewals

The annual renewal process for Microsoft Certifications is really good: it is clear how the process works, the reminders are effective and the procedure is accessible for candidates on a budget. The renewal is easier than the full exam, but I still find it useful as a refresher on current material. Microsoft also update the learning paths, and I try to work through these at renewal time so I feel I am doing the renewal process justice.

I tend to do renewals as soon as possible and usually clear them within a day or two of the window opening.

There isn’t a sharing process for renewals like the Credly badge process, so I tend to skip posts on my renewals and just update the dates on my LinkedIn profile. It was therefore interesting to see how many I’d done in 2022. A top tip for planning renewals: if you add /renew to the address of the certification page while you are logged in, the page will tell you how many days remain until the renewal window opens.
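As a rough illustration of the /renew tip, here is a minimal Python sketch that appends /renew to a certification page address. The helper name `renewal_url` and the example address are my own placeholders rather than anything published by Microsoft Learn, so swap in the address of your own certification page.

```python
from urllib.parse import urlsplit, urlunsplit


def renewal_url(certification_url: str) -> str:
    """Return the certification page address with '/renew' appended to its path."""
    parts = urlsplit(certification_url)
    # Drop any trailing slash so we never produce '//renew'.
    path = parts.path.rstrip("/")
    # Leave the address alone if it already ends in '/renew'.
    if not path.endswith("/renew"):
        path += "/renew"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


# Hypothetical certification page address, used only for illustration.
example = "https://learn.microsoft.com/certifications/example-certification/"
print(renewal_url(example))
```

The day count itself is only shown on the page when you are logged in, so this sketch just builds the address to open in a browser.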

| Date | Certification |
| --- | --- |
| 6 March 2022 | Microsoft Certified: Azure Solutions Architect Expert |
| 23 April 2022 | Microsoft 365 Certified: Security Administrator Associate |
| 29 April 2022 | Microsoft 365 Certified: Teams Administrator Associate |
| 28 May 2022 | Microsoft Certified: DevOps Engineer Expert |
| 2 June 2022 | Microsoft Certified: Azure Administrator Associate |
| 28 June 2022 | Microsoft Certified: Information Protection Administrator Associate |
| 13 August 2022 | Microsoft Certified: Azure Security Engineer Associate |
| 11 October 2022 | Microsoft Certified: Azure Data Engineer Associate |
| 12 November 2022 | Microsoft Certified: Identity and Access Administrator Associate |
| 13 December 2022 | Microsoft Certified: Azure Virtual Desktop Specialty |
| 31 December 2022 | Microsoft Certified: Security Operations Analyst Associate |

2023?

Other than maintaining my existing certifications, I don’t have any huge plans as I write, but this in itself isn’t unusual. Throughout my career of working with Microsoft Partners on projects, things have been fairly responsive, i.e. clarity builds through the year. This year we also have the added factor of the new Solutions Partner designations, which are still bedding in.

This year might see an additional effort from me to branch into AWS certification and possibly security certification. This reflects the work mix I currently have with my main customer and will be an interesting change for me.